Instagram Strengthens Teen Safety With Search Alerts for Parents

In the coming weeks, Instagram will roll out alerts for parents whose kids search for topics related to self-harm or suicide

Starting this March, Instagram will introduce an alert system that notifies parents when their teens search for terms related to suicide or self-harm. The feature is part of Meta’s continued efforts to strengthen safety measures for young users and support families navigating the digital world.

As conversations around teen mental health and social media intensify, many parents have called on tech companies to take a more active role in protecting young users. In response, Meta, Instagram’s parent company, announced that parents enrolled in its parental supervision tools will begin receiving notifications if their teen searches for concerning terms related to self-harm or suicide.

The update builds on Instagram’s existing Teen Accounts framework, which gives parents greater visibility into their child’s activity while also connecting teens to appropriate support when they need it most.

How the alerts work

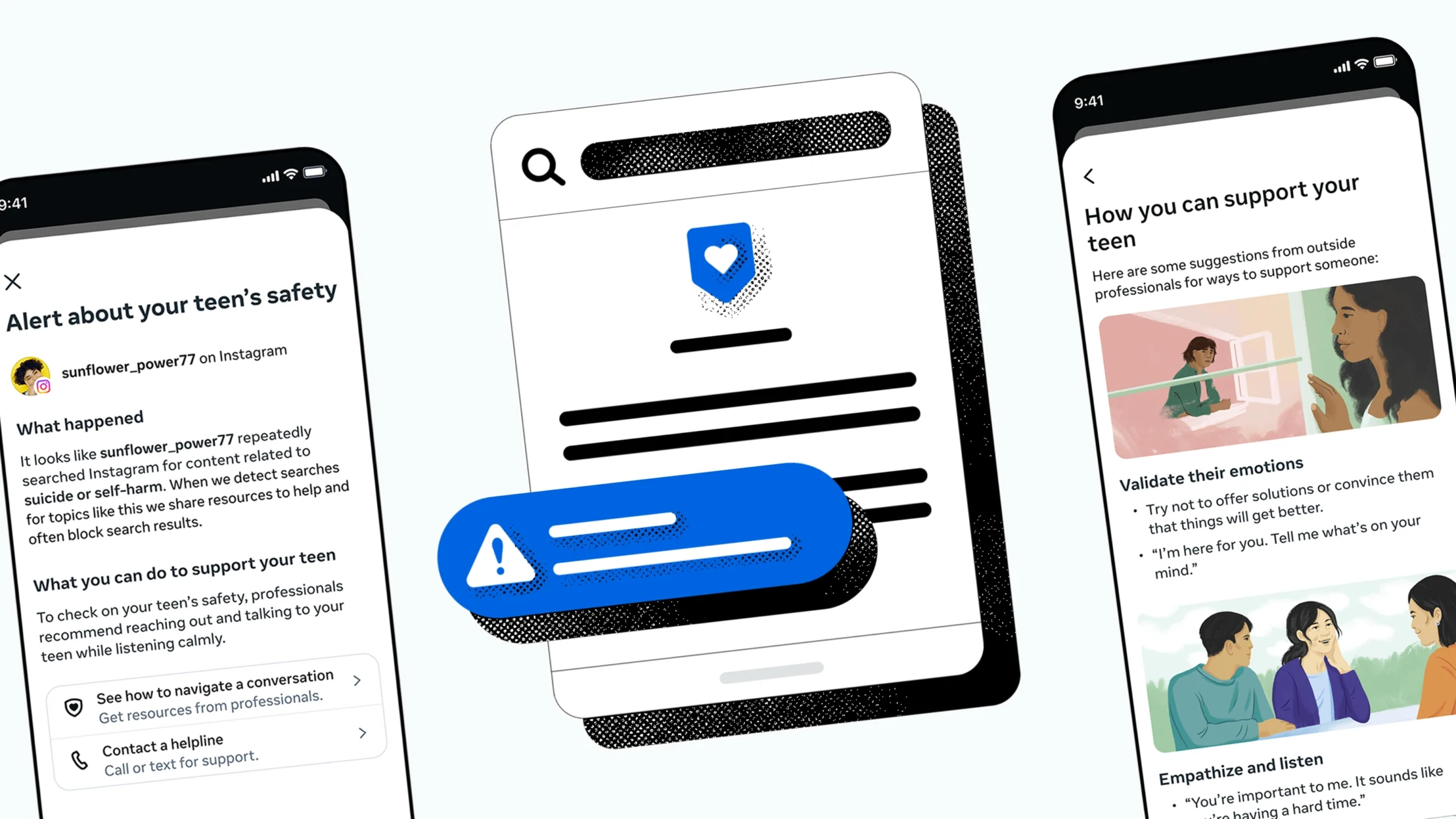

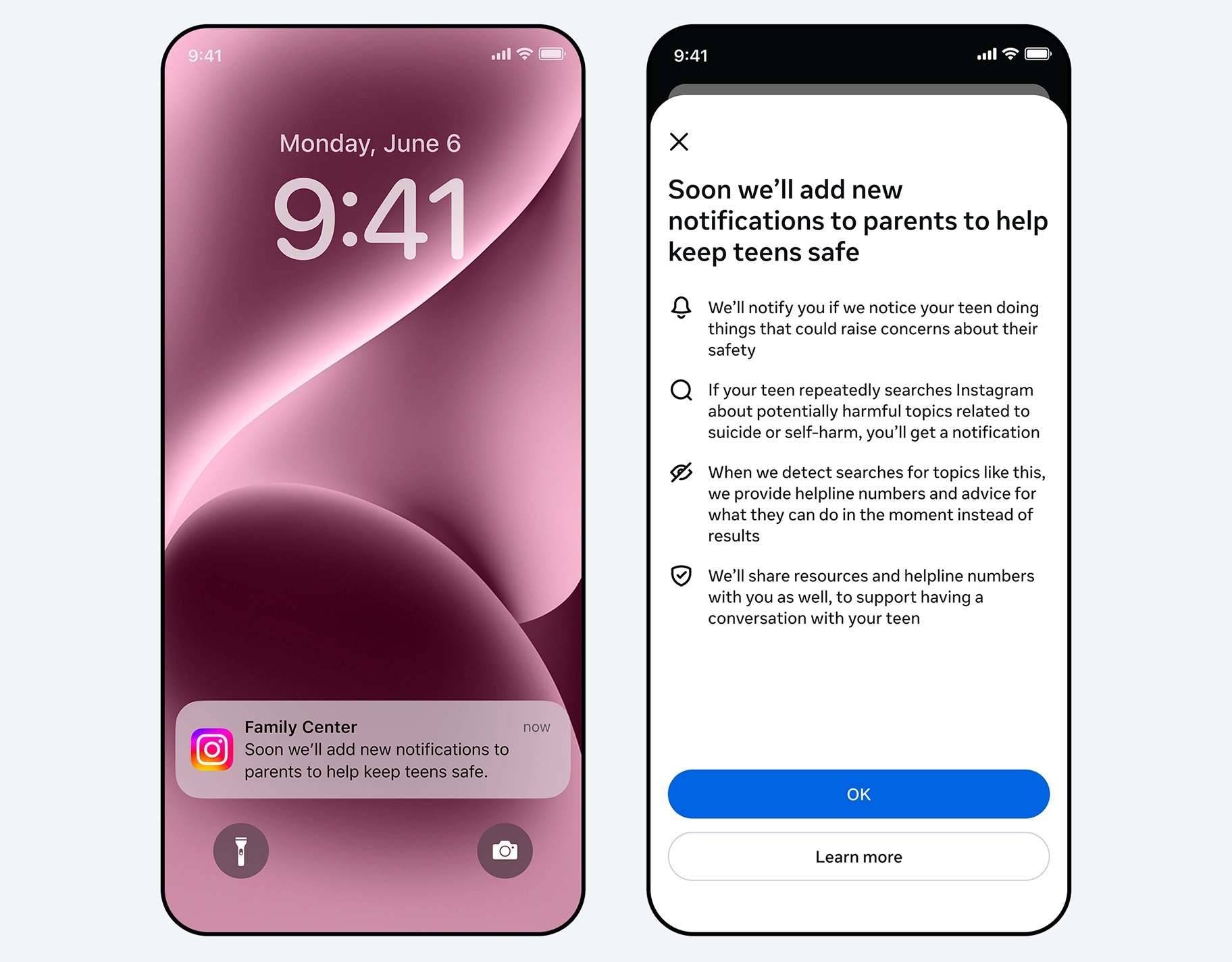

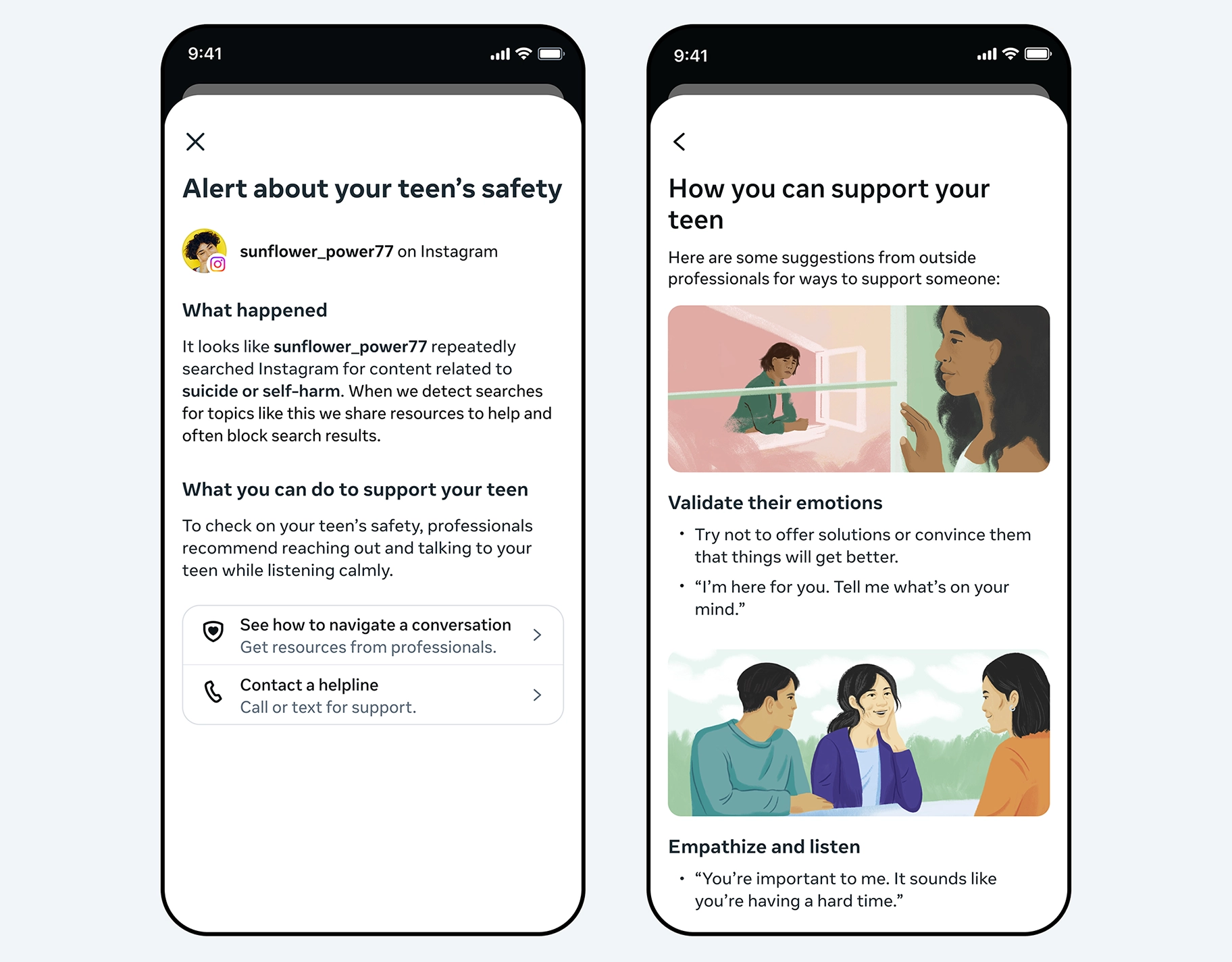

For families who have enabled supervision, both parents and teens will receive alerts if certain sensitive search terms are used. These notifications may be delivered via email, text message, WhatsApp, or in-app alerts, depending on the parent’s contact settings.

When parents tap the notification, they’ll see a prompt explaining that their teen recently searched for concerning terms. The alert will not provide extensive detail but will offer enough context to encourage timely and supportive conversations. Parents will also be directed to expert-backed resources to help them approach the topic with care and understanding.

Instagram emphasizes that while most teens do not use the platform to search for harmful content, the company has systems in place to block such searches and instead guide users toward crisis helplines and professional support.

Initially, the feature will roll out to supervised accounts in the United States, Canada, Australia, and the United Kingdom, with plans to expand to more regions later this year.

Supporting parents while respecting teens

Meta says the goal is to empower parents without creating unnecessary alarm. The company worked with mental health experts and partner organizations to ensure the alerts are thoughtful, measured, and focused on early intervention.

“These alerts are meant to provide parents with awareness and resources, while still encouraging open communication between families,” the company shared.

The new system builds on Instagram’s existing safeguards, including strict policies against content that promotes or glorifies self-harm. While teens may still encounter discussions about mental health and recovery, such content is limited, and harmful material is restricted or hidden from younger users.

Preparing for the future of digital support

Meta also acknowledged that many teens are increasingly turning to AI tools for emotional support. In response, the company is developing similar parental alerts for certain AI-related interactions on Instagram.

While its AI systems are already designed to respond safely and direct teens to appropriate resources, future updates may notify parents if a teen engages in conversations with AI that involve self-harm or suicide-related themes.

For parents, this update reflects a growing shift in how tech platforms approach youth safety: not by replacing parental guidance, but by offering tools that help families stay informed and connected.

In today’s digital landscape, awareness is one of the most important tools parents have. And while technology can’t replace meaningful conversations at home, features like this may help open the door when support is needed most.

Frequently Asked Questions

What does Instagram’s new alert feature do?

Instagram’s new feature notifies parents if their teen searches for terms related to suicide or self-harm. The goal is to help parents become aware and offer support when needed.

Who will receive the alerts?

Parents who have enrolled in Instagram’s parental supervision tools will receive alerts. Teens under supervision will also be aware that these alerts are enabled.

How will parents be notified?

Parents may receive notifications through email, text message, WhatsApp, or directly within the Instagram app, depending on their notification settings.

Where will the alerts be available?

The alerts will initially roll out in the United States, Canada, Australia, and the United Kingdom, with plans to expand to other regions later.

What happens when a teen searches for harmful content?

Instagram blocks or limits harmful content and instead directs teens to trusted resources, helplines, and professional support.
